Written by the Short Drama Lab team. We translate short drama content across TikTok, YouTube Shorts, and Instagram Reels—covering romance, action, comedy, and drama genres in multiple language pairs. Every tool and workflow in this guide was tested on actual clips in February–March 2026, including scenes with fast dialogue, overlapping speakers, and heavy emotional beats.

Which Method Fits Your Situation?

Start here before reading anything else. The right method depends on your content type, publishing frequency, and how much accuracy matters for a given clip:

- Testing reach → Platform auto-translate (TikTok/YouTube): free, instant, good enough to gauge audience interest before investing

- Regular creator → AI tool + quick review: best balance of speed, quality, and cost for most workflows

- Emotional or complex content → AI draft + native speaker review: adds cultural accuracy without paying full professional rates

- Licensed or official releases → Professional service (Rev, Gengo, Viki): certified output, broadcast-ready quality

4 Methods to Translate Short Drama Videos

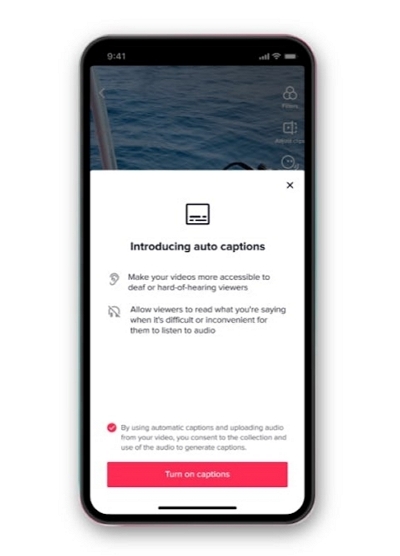

Method 1: Platform Built-In Tools

YouTube, TikTok, and Instagram Reels all offer free auto-captioning and auto-translation. Upload your clip, enable captions, and the platform generates subtitles in 100+ languages within minutes—no setup required. In our testing, accuracy on clean, straightforward dialogue was reasonable. Accuracy on dramatic scenes with emotional delivery, slang, or overlapping dialogue dropped significantly—often producing literal translations that sounded robotic or missed the tone entirely. Use this method to test whether a new language market is interested in your content before investing in better translation. Don't publish it as your final product for content where tone matters.

✅ Free, fast, accessible to everyone ❌ Limited accuracy, weak with emotional or idiomatic dialogue

Method 2: AI-Powered Translation Tools ⭐ Recommended for Most Creators

Dedicated AI tools go further than platform tools: they transcribe, translate, sync subtitle timing to the audio, and often offer voice dubbing. In our tests, modern AI tools handled contemporary drama dialogue well, including emotional phrasing, common idioms, and rapid exchanges. Comedy relying on wordplay and historical drama with archaic language still needed a human review pass. Cost is typically $10–30/month for unlimited clips. The key difference between tools is dubbing quality: if you want voiced translations rather than subtitles, test a few clips on free trials before committing. Mediaio Video Translator consistently produced the best results on emotional scenes in our testing.

✅ Fast, affordable, supports dubbing, handles dramatic content well ❌ Cultural nuances may need manual review

Method 3: AI + Native Speaker Review

Run the clip through an AI tool for the initial translation, then hire a native speaker for a focused 1–2 hour review. On Fiverr or Upwork this costs $15–40 per clip. You get near-professional accuracy at a fraction of full professional rates. This is what we use for emotionally pivotal scenes, comedy, and any content where cultural nuance directly affects audience retention.

✅ High accuracy, strong cultural adaptation ❌ Adds 1–2 days turnaround

Method 4: Professional Translation Services

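Agencies like Rev, Gengo, and Viki employ translators who specialise in media content and understand drama conventions. Accuracy is highest; turnaround is 3–7 days; cost is $100–500 per video. Right for licensed releases, branded content, and anything requiring certification; not practical for creators publishing daily.

✅ Broadcast-ready quality, certified output ❌ Expensive, slow turnaround

Side-by-Side Comparison

| Method | Cost | Turnaround | Best for |
|---|---|---|---|
| Platform built-in tools | Free | Minutes | Testing reach |
| AI-powered tools | $10–30/month | 15–25 minutes per clip | Regular creators |
| AI + native speaker review | $15–40 per clip | 1–2 days | Emotional or complex content |
| Professional services | $100–500 per video | 3–7 days | Licensed or official releases |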
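To see how the quoted prices scale with publishing volume, the arithmetic can be sketched in a few lines of Python. This is a rough budgeting aid, not a pricing model: the function name and the clip count are hypothetical, and the hybrid figure assumes you pay for an AI subscription plus a reviewer on every clip, which the guide does not state explicitly.

```python
# Price ranges quoted in this guide, in USD.
AI_TOOL_MONTHLY = (10, 30)           # flat subscription, unlimited clips
REVIEWER_PER_CLIP = (15, 40)         # native speaker review, per clip
PROFESSIONAL_PER_VIDEO = (100, 500)  # agency rate, per video


def monthly_cost_ranges(clips_per_month: int) -> dict[str, tuple[int, int]]:
    """Return a (low, high) monthly cost estimate for each method."""
    ai_low, ai_high = AI_TOOL_MONTHLY
    rev_low, rev_high = REVIEWER_PER_CLIP
    pro_low, pro_high = PROFESSIONAL_PER_VIDEO
    return {
        "platform": (0, 0),
        "ai_tool": (ai_low, ai_high),
        # Assumption: hybrid = AI subscription + a paid review of every clip.
        "ai_plus_review": (
            ai_low + rev_low * clips_per_month,
            ai_high + rev_high * clips_per_month,
        ),
        "professional": (
            pro_low * clips_per_month,
            pro_high * clips_per_month,
        ),
    }


# Hypothetical volume: 20 clips per month.
for method, (low, high) in monthly_cost_ranges(20).items():
    print(f"{method}: ${low}-${high} per month")
```

At 20 clips a month, reviewing every clip costs roughly ten times the AI subscription alone, which is one reason to reserve the hybrid method for the scenes where tone matters most.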

The Translation Workflow: Step by Step

This workflow applies regardless of which tool you use. Steps 1–3 are universal preparation; Steps 4–7 walk through the full Mediaio Video Translator workflow as the recommended tool for most creators.

Before You Upload: Prepare Your Audio

AI translation accuracy depends heavily on audio clarity. Background music mixed into the dialogue track at high volume causes AI tools to miss speech boundaries, producing mistimed or missing subtitles. Fix this before anything else: export a dialogue-only mix if possible, or use a noise reduction tool to reduce background music. This single step improves output quality more than any tool setting.

Before You Configure: Decide on Subtitles or Dubbing

Subtitles are faster to produce and easier to review. Dubbing sounds more immersive but takes longer and costs more. For Spanish and Portuguese-speaking audiences, dubbing typically outperforms subtitles in retention. For English audiences watching foreign content, subtitles are standard and expected.

Recommendation: Start with subtitles. Test audience engagement. Only add dubbing to your top-performing clips once you've confirmed the language market responds.

Step-by-Step: Translating a Short Drama Clip with Mediaio Video Translator

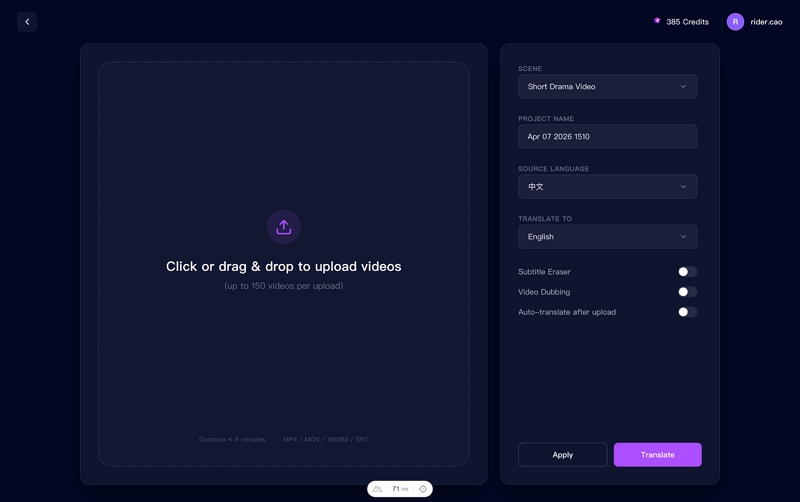

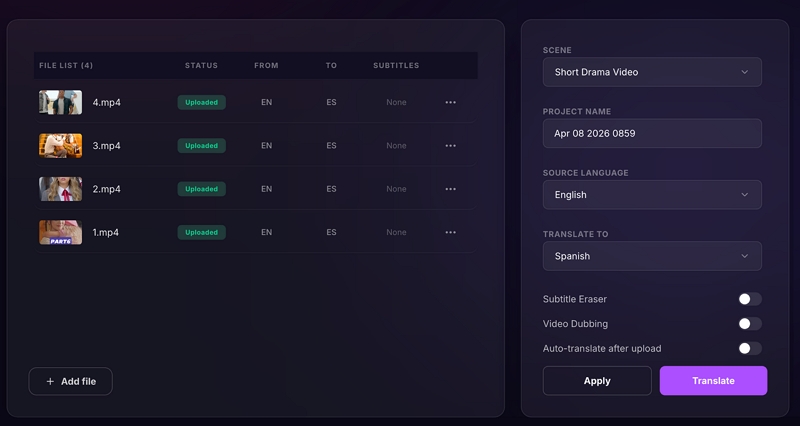

Go to Mediaio Video Translator and upload your drama clip. Supported formats include MP4, MOV, and AVI.

Set your source and target languages.

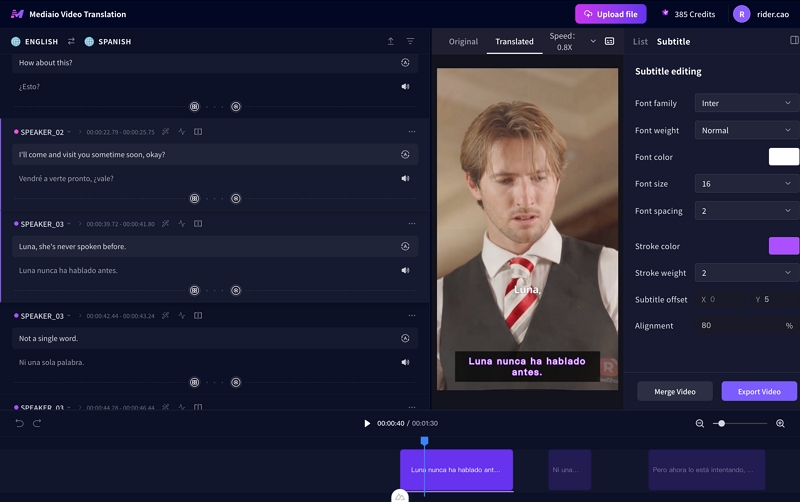

Once processing is complete (typically 5–10 minutes), Mediaio Video Translator opens an inline editing interface.

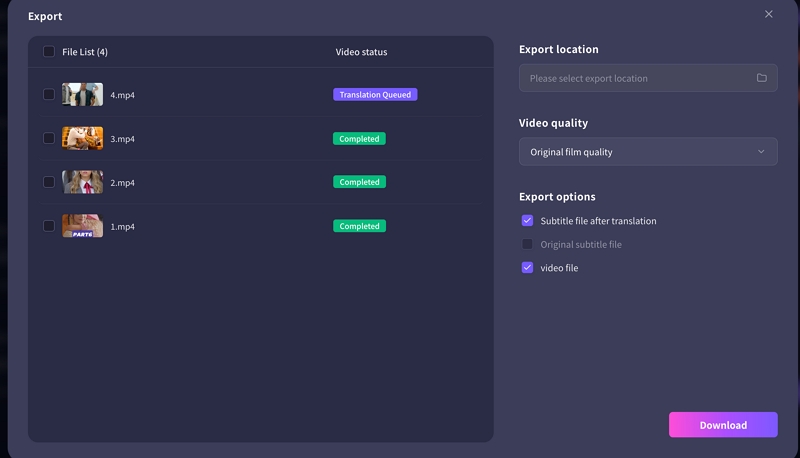

Export format depends on where you're publishing. YouTube accepts SRT uploads as a separate subtitle track (upload via YouTube Studio → Subtitles → Add language → Upload file)—this keeps subtitles indexable by YouTube's search algorithm and lets viewers toggle them on or off. TikTok and Instagram Reels require burned-in subtitles embedded directly in the video, since neither platform supports external subtitle files. Export both versions if you're publishing across platforms. Before posting anywhere, watch the exported clip on your phone: subtitle text that looks fine on a laptop often overlaps or becomes unreadable on a 6-inch screen, especially during fast exchanges.
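Part of the phone check can be automated before you pick up the device. The sketch below scans an exported SRT file's text for lines longer than the 42-character mobile limit this guide recommends. It assumes a conventionally formatted SRT (cue number, timing line, then text, with blank lines between cues); the function name and sample cues are hypothetical, and it does not replace watching the clip on a real screen.

```python
import re

MAX_CHARS = 42  # per-line ceiling recommended in this guide for mobile

# An SRT timing line looks like "00:00:01,000 --> 00:00:02,500".
TIMING = re.compile(r"\d{2}:\d{2}:\d{2},\d{3} --> \d{2}:\d{2}:\d{2},\d{3}")


def find_long_lines(srt_text: str, max_chars: int = MAX_CHARS) -> list[tuple[int, str]]:
    """Return (cue_number, line) for every subtitle line over max_chars."""
    problems = []
    # Cues are separated by blank lines in SRT files.
    for block in re.split(r"\n\s*\n", srt_text.strip()):
        lines = block.splitlines()
        well_formed = (
            len(lines) >= 3
            and lines[0].strip().isdigit()
            and TIMING.match(lines[1].strip())
        )
        if not well_formed:
            continue  # skip anything that is not a recognisable cue
        cue_number = int(lines[0])
        for text in lines[2:]:
            if len(text) > max_chars:
                problems.append((cue_number, text))
    return problems


sample = """1
00:00:01,000 --> 00:00:02,500
I don't want to talk about this right now

2
00:00:02,600 --> 00:00:05,000
You never told me the wedding was cancelled yesterday
"""

print(find_long_lines(sample))
# [(2, 'You never told me the wedding was cancelled yesterday')]
```

Any cue it flags is a candidate for condensing or splitting across two lines before you export.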

The Four Challenges That Break Most Translations

These are the specific failure points we encountered most frequently in testing. Each has a concrete fix.

Slang and colloquialisms. Slang rarely translates word-for-word without sounding robotic or losing meaning entirely. The fix is localized equivalents that carry the same tone and social weight, not a literal swap. "Don't ghost me" needs a culturally familiar alternative in the target language, not a direct translation.

Emotional dialogue losing impact. Dramatic scenes depend on rhythm, tension, and character chemistry. When translated too literally, emotional lines flatten. Prioritize tone over exact wording: shorter, sharper lines for confrontations; softer phrasing for vulnerable moments. Read the line aloud in the target language; if it doesn't feel right, rewrite it.

Text too long for quick scenes. Short dramas feature rapid exchanges where long translated lines won't fit on screen without overwhelming the viewer. Condense while keeping the core meaning: trim filler words, split lines across natural speech beats, and target 42 characters per line maximum on mobile.

Multiple speakers talking simultaneously. A single centered subtitle line for two speakers is one of the most common readability failures in drama translation. Fix it with color-coded subtitles, speaker labels ([MAYA] at the start of the line), or staggered positioning, with one speaker at the top of the frame and one at the bottom.

Subtitle Styling for Drama Content

Styling determines whether viewers can actually read your subtitles, especially on mobile. These rules come from testing across TikTok, YouTube Shorts, and Instagram Reels.

Font: bold sans-serif, not decorative. Clean fonts like bold Helvetica or Noto Sans read best on compressed mobile video. Decorative or script fonts fail at small sizes.

Color: white text with dark outline or semi-transparent background. White text on bright scenes becomes invisible. Add a black outline (1–2px) or a semi-transparent dark background box behind the subtitle. This works across both bright and dark scenes.

Position: bottom-centre by default, top for dubbed versions. Bottom-centre is the standard for subtitled content. If you've used voice dubbing, consider top placement so subtitles don't cover facial expressions during emotional scenes, which is exactly when viewers need to see the actor's face.

Line length: 42 characters maximum per line on mobile. Anything longer wraps awkwardly or gets cut off. Count characters, not words. "I don't want to talk about this right now" is 41 characters, which is right at the ceiling.

Mistakes That Hurt More Than Bad Translation

Publishing without reviewing any scenes. AI generates a complete subtitle file and it feels done. Publishing without checking even the emotional peak scenes is the single biggest quality failure we see. It takes 10 minutes to check three key scenes. Do it every time.

Using the same subtitle style across all target languages. German lines are significantly longer than English equivalents. Spanish often needs more words than Mandarin for the same idea. Font size, line breaks, and timing may need adjusting per language, not just per clip.

Uploading a clip with noisy audio and expecting clean output. Every AI tool is limited by audio quality. Background music above dialogue level, wind noise, and low-bitrate audio all degrade transcription accuracy before translation even starts. Fix audio first, every time.

Inconsistent character names across episodes. "Xiao Wei" in episode one becoming "Wei Wei" and then "Little Wei" across a series confuses viewers and hurts retention. Lock character name formats before translating episode one, then keep a reference document for the full series.

Checking subtitles on desktop only. Short drama audiences watch on phones. Subtitle readability, timing, and line breaks look very different on a 6-inch screen than on your editing monitor. Final check on mobile, every time, no exceptions.

One extra check worth doing: After publishing, read your first 20–30 comments in the new language. Native speakers will tell you immediately if something sounds off; this feedback loop is faster and more accurate than any internal review process.

Common Questions

How long does it take to translate a short drama clip?

With an AI tool like Mediaio Video Translator: 15–25 minutes total, roughly 10 minutes for processing and 10–15 minutes for your review pass on key scenes. With the hybrid method (AI + human reviewer): add 1–2 days for reviewer turnaround. Professional services add 3–7 days. For a series, both timelines compress once reviewers become familiar with the characters and tone.

Should I use subtitles or dubbing?

It depends on the target market. Spanish and Portuguese-speaking audiences, particularly in Latin America, engage better with dubbed content. English-speaking audiences consuming foreign-language drama expect and accept subtitles. French and German audiences are split. Test both formats on your highest-performing clip before building a full dubbing workflow. Poor dubbing (robotic voices, mistimed audio) hurts retention more than subtitles, so only switch to dubbing when your tool produces natural-sounding output.

Why are my subtitles out of sync with the audio?

The cause is almost always audio quality, not the tool. AI tools calculate subtitle timing from speech detection, and heavy background music makes it hard to identify where speech starts and ends, pushing everything slightly late. Fix: reduce background music volume in the source file before uploading to Mediaio Video Translator, or use a manual timeline editor to shift all subtitles together as a block rather than adjusting them individually.
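If your editor has no block-shift feature and you have an exported SRT file, the same correction can be applied to the file directly. The following is a minimal sketch, assuming standard `HH:MM:SS,mmm` timestamps; `shift_srt` is a hypothetical helper, and the right offset has to be found by watching the clip.

```python
import re

TIMESTAMP = re.compile(r"(\d{2}):(\d{2}):(\d{2}),(\d{3})")


def shift_srt(srt_text: str, offset_ms: int) -> str:
    """Shift every cue in an SRT document by offset_ms (negative = earlier)."""

    def shift(match: re.Match) -> str:
        hours, minutes, seconds, millis = (int(g) for g in match.groups())
        total_ms = ((hours * 60 + minutes) * 60 + seconds) * 1000 + millis
        # Clamp at zero so no cue ends up before the start of the video.
        total_ms = max(total_ms + offset_ms, 0)
        hours, rest = divmod(total_ms, 3_600_000)
        minutes, rest = divmod(rest, 60_000)
        seconds, millis = divmod(rest, 1000)
        return f"{hours:02d}:{minutes:02d}:{seconds:02d},{millis:03d}"

    shifted = []
    for line in srt_text.splitlines():
        # Only rewrite timing lines, so digits in the dialogue stay untouched.
        if "-->" in line:
            line = TIMESTAMP.sub(shift, line)
        shifted.append(line)
    return "\n".join(shifted)


# Subtitles running about 300 ms late: pull every cue 300 ms earlier.
late = "1\n00:00:01,000 --> 00:00:02,500\nDon't leave.\n"
print(shift_srt(late, -300))
```

Because every cue moves by the same amount, the relative timing between lines is preserved, which is the point of shifting as a block.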

How should I handle character names and titles?

Keep the original romanized pronunciation for character names; don't translate them. Do translate titles and forms of address contextually: a character called "总裁" should appear as "CEO" or "Director," not as a romanization that means nothing to your target audience. On a character's first appearance, add a brief identifier in parentheses and then just use the name from that point forward. Viewers adapt quickly to unfamiliar names; inconsistency is what creates confusion.
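Name consistency is easy to check mechanically once you have a reference document. The sketch below scans subtitle lines for known off-glossary variants of a character name. The glossary structure and function name are hypothetical; the example names are the ones used earlier in this guide.

```python
# Hypothetical series glossary: canonical name -> variants that must not appear.
NAME_GLOSSARY = {
    "Xiao Wei": ["Wei Wei", "Little Wei"],
}


def find_name_variants(
    lines: list[str], glossary: dict[str, list[str]]
) -> list[tuple[int, str, str]]:
    """Return (line_number, variant_found, canonical_name) for each mismatch."""
    issues = []
    for number, line in enumerate(lines, start=1):
        lowered = line.lower()  # compare case-insensitively
        for canonical, variants in glossary.items():
            for variant in variants:
                if variant.lower() in lowered:
                    issues.append((number, variant, canonical))
    return issues


episode_lines = [
    "Xiao Wei, wait!",
    "Little Wei is gone.",
    "Where is wei wei?",
]

print(find_name_variants(episode_lines, NAME_GLOSSARY))
# [(2, 'Little Wei', 'Xiao Wei'), (3, 'Wei Wei', 'Xiao Wei')]
```

Running a check like this on each episode before export catches drift that is hard to spot when episodes are translated weeks apart.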

Which languages should I translate into first?

Check your analytics before choosing. YouTube Studio and TikTok both show country-level data for views that didn't come from your primary language audience. If you're seeing meaningful traffic from Brazil without any Portuguese content, that's your signal. As a general starting point: Spanish (largest reach for most creators), Brazilian Portuguese (high engagement with drama content), and Hindi (fast-growing short-form audience) consistently outperform other language markets for drama genres. But your own data should drive the decision.